Not All Citations Can Be Trusted

If you’re not familiar with the term “hallucination,” it’s what happens when an AI confidently gives you an answer… that’s completely made up. It might sound convincing. It might even cite a source. But sometimes a quick Google search is all it takes to realize that the study, case, or quote never existed.

That’s the scary part. And it’s not going away.

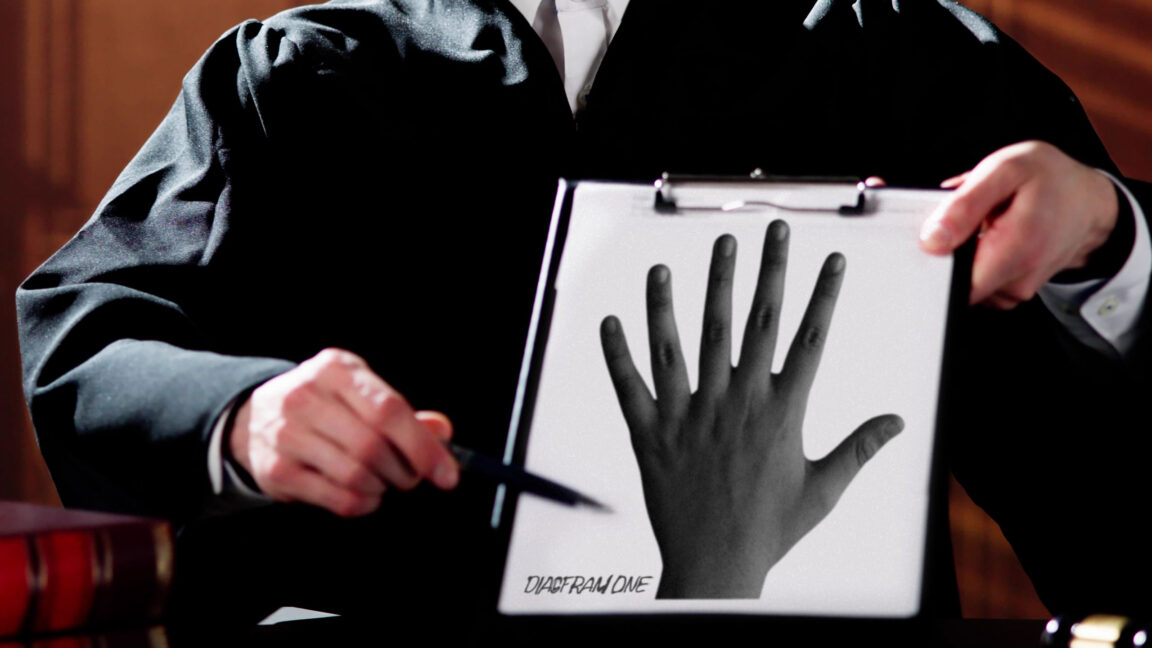

In fact, hallucinations are getting worse, not better. And the bigger issue is that AI is now quietly woven into the background of nearly everything we read: articles, presentations, reports, sometimes even court filings.

That’s why I think we’ll start seeing a shift where citations aren’t just taken at face value anymore. Judges, editors, and program officers need to start asking: “Did you actually check this? Is this citation real?”

The good news? There’s a growing wave of tools designed to help with exactly that:

- Sourcely scans your writing and suggests credible, traceable sources, or flags weak ones that don’t hold up.

- Scite Assistant reviews your citations and shows whether later research supports or contradicts the claim.

- Perplexity isn’t perfect, but I often use it as a quick smell test when an AI-generated response feels a little too slick.

- LegalEase, Westlaw, and other legal tools are already helping firms and courts catch citation errors before they make it into the record.

Just because a model says something, even with a polished citation attached, doesn’t mean it’s true. We used to say, “Just take a look, it’s in a book.” Now? You’d better take a second look, and make sure that book actually exists.

If your work makes claims, especially ones tied to legal precedent, public health, or science, then checking those claims has to become part of the workflow.

Truth still matters. And in the age of generative content, fact-checking is the new spellcheck.