Issue #44: Show me something, mister

Howdy 👋🏾, in this year’s growing list of finallys – Humane released their AI pin, and reviews have been brutal. The gist is that the device has wonderfully designed hardware that feels great and software that badly underdelivers. My pin is still on order, so I’ll get a chance to confirm the reviews in a few weeks, assuming the company makes it that long.

One of the hallmark features of the AI pin is its front-facing camera’s ability to take pictures or video of objects in front of it and respond using AI. Well, folks, as of a few weeks ago, OpenAI has opened that same ability to all of us by introducing ChatGPT 4 Vision through its developer APIs. Using Vision, you can send images directly to ChatGPT along with a prompt asking it to explain the contents of the image. So, for this week’s newsletter, I added a new feature to my playground that does image-to-text prompts using this API. I’ll also walk through my experiences so far and show some of the ways companies could use this API as they build this feature into their applications.

To be clear – this isn’t a new feature. Many AI-powered chatbots, including Anthropic’s Claude 3, allow you to upload documents or images they can interpret or read. Still, the power of this feature through API access is the ease of integrating it into your web or mobile applications. But before we jump into Vision, I decided to ask Claude to imagine it’s the magic mirror from Disney’s Snow White and to respond to a picture of me delivering one of my workshops. Here is the prompt I gave it:

“O wise and witty Claude, digital mirror on the screen,

I present to you this photo, a sight to be seen.

Gaze upon these faces with your analytical eye,

And humorously declare, who is the fairest of this tie?

Judge not by mere beauty but by the sparkle within,

Unique traits and quirks, a smile or cute grin.

Be playful and kind, avoid words that may sting,

For each has their charm, a compliment please bring!”

In addition to the prompt, I also uploaded an image of me delivering an in-person workshop. Here is how Claude responded:

“Ah, from the sphere of digital deliberations,

A canvas unfolds in engaging presentations!

In spotlight, one figure stands, a guide in this technological fest,

With a warm, welcoming smile holding the essence of jest.

His gleeful grin speaks of enthusiasm and wit,

An orator’s charm, brightly lit.

Clad in casual garb yet professional plenty,

With sneakers as red as a berry, not twenty!

He oversees this digital conclave with flair,

About OpenAI wonders, he’s eager to share.

The fairest of the fair? One mustn’t dare choose,

For in this convivial scene, no one could lose.

Instead, let’s celebrate his vibrant pose,

Commanding yet kind—he keeps all on their toes!

So there’s your answer, spun with playful glee,

Cheers to the speaker from this digital spree!”

Thanks, Claude. I think I’m blushing.

The power of Vision’s API access is that we can take these powers and build them directly into applications. Humane showcased this in founder Imran Chaudhri’s TED Talk when he asked the device if he could eat a chocolate bar or asked about calories by looking at a dish. To test this, I went through my photo album for pictures of meals (including some I googled) and provided them to Vision.

You should note that the Vision API is different in that it requires a text prompt before the image upload and optionally allows you to add one after the image. It also supports the ability to upload multiple images at once. For my example, I gave it the following pre-prompt:

“You are an AI-powered tool that accepts an image of a cooked dish, analyzes it to identify the ingredients and cooking methods used, and provides the user with a recipe that closely matches the dish in the image. Your goal is to help users recreate meals they have enjoyed or discover new recipes inspired by the dishes they have encountered, ultimately empowering them to expand their culinary knowledge and skills.”
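To make that message layout a little more concrete, here is a rough sketch of how a request body could be assembled: a text part first, then one or more images, then an optional closing text part. The helper name `buildVisionRequest`, the model name, the `max_tokens` value, and the example URL are all my own placeholders rather than anything from my playground, so treat this as an illustration under those assumptions.

```javascript
// Hypothetical helper: builds a chat-completions style request body with
// text before the image(s) and an optional text part after them.
function buildVisionRequest(prePrompt, imageUrls, postPrompt) {
  // The pre-prompt always goes first.
  const content = [{ type: "text", text: prePrompt }];

  // Multiple images are simply additional parts in the same message.
  for (const url of imageUrls) {
    content.push({ type: "image_url", image_url: { url } });
  }

  // The trailing prompt is optional, so only add it when provided.
  if (postPrompt) {
    content.push({ type: "text", text: postPrompt });
  }

  return {
    model: "gpt-4-turbo", // assumption: swap in whichever vision-capable model you use
    max_tokens: 500,      // assumption: an arbitrary cap on the reply length
    messages: [{ role: "user", content }],
  };
}

const body = buildVisionRequest(
  "You are an AI-powered tool that accepts an image of a cooked dish.", // shortened pre-prompt
  ["https://example.com/dinner.jpg"], // placeholder image URL
  "What recipe would get me closest to this?"
);
console.log(JSON.stringify(body, null, 2));
```

You would then POST this body as JSON to OpenAI’s chat completions endpoint with your API key in the Authorization header. I’ve left the network call out so the sketch runs without a key.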

Next, I offered it this questionable image of a fake product from Meow Wolf’s Omega Mart at Area 15, and it responded:

“The image you’ve uploaded appears to represent an artistic creation rather than a conventional cooked dish. It seems to depict a “cake” designed to closely mimic a seafood and vegetable theme, possibly inspired by decorative elements one might find in a sushi restaurant, such as octopus and garnishes.

If you are interested in creating something similar, here are some basic steps you could follow using cake decorating techniques to replicate the look of seafood and vegetables:

Ingredients:

- A basic cake base (use your favorite recipe, vanilla or flavored to match the desired final color).

- Fondant (colored in various shades to match seafood and vegetable colors).

- Edible food coloring (reds, greens, whites, etc.).

- Buttercream frosting.

- Tools for fondant shaping (rolling pin, cutting tools, molds).

Instructions:

- Prepare Cake Base:

– Bake your cake in the desired shape. A round or dome-shaped pan might work well for this project.

- Frost the Cake:

– Once cooled, cover the cake smoothly with a thin layer of buttercream frosting to help the fondant adhere.

- Color and Roll Out Fondant:

– Color your fondant into the desired colors using food coloring. For realistic effects, you might need to create shades of green for lettuce, deep red and white for octopus-like textures, and other colors as needed.

– Roll out the fondant thinly.

- Shape the Fondant:

– Use tools to cut and mold the fondant into shapes that resemble seafood and vegetables. For an octopus appearance, create tentacles with suction cup shapes pressed in.

– For the lettuce, you might want to roll a lighter green fondant into ruffled layers to mimic leaves.

- Assemble the Decoration:

– Carefully place your fondant shapes onto the frosted cake, arranging them to mimic the display in the photo, ensuring each piece adheres properly to the surfaces.

- Final Touches:

– Use additional fondant or icing to add details, such as the defining lines or textures, to enhance the realism. Adjust colors with a fine brush and food coloring if needed.

Creating such a cake requires patience and some artistic skill, especially in sculpting and coloring fondant. If you’re new to fondant work, consider practicing the shapes separately before attempting the full assembly. Enjoy the process of crafting this unique and eye-catching creation!”

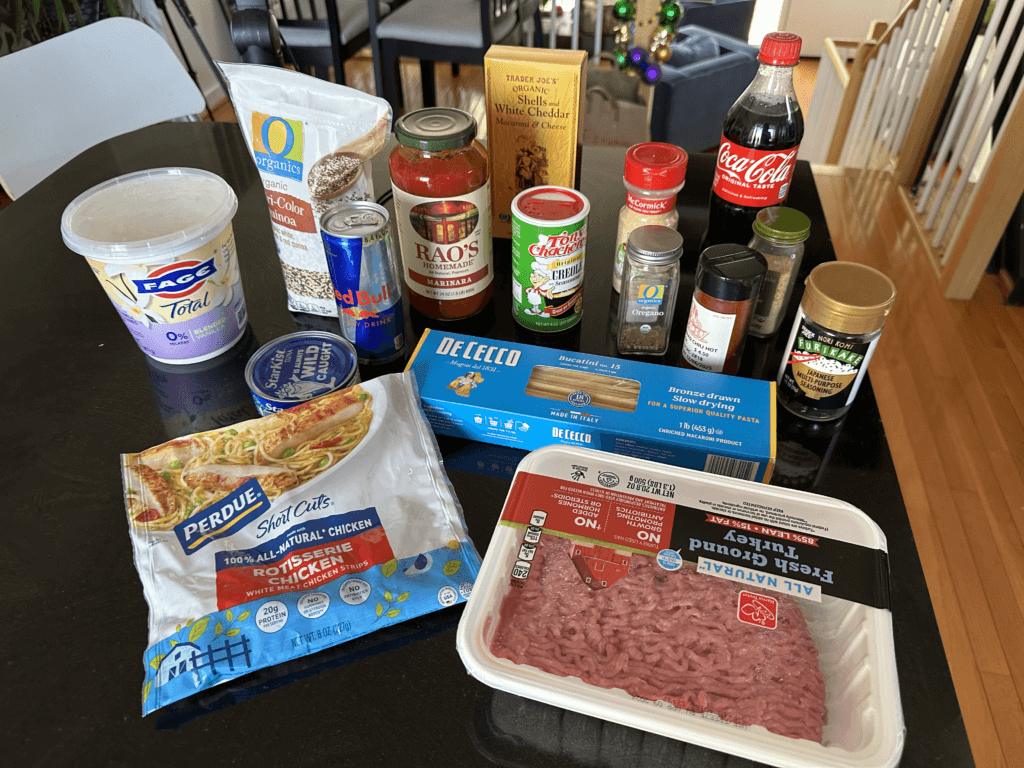

I decided to take this up a notch, took a picture of a set of ingredients (see below), and asked it to make recommendations for what I should cook for dinner. I did this using this pre-prompt:

“You are an AI-powered tool that accepts an image of ingredients, analyzes it to identify the ingredients, and provides the user with three recipe recommendations based on the identified ingredients. Your goal is to help users find creative and practical recipe ideas that utilize the ingredients they have available, ultimately reducing food waste and inspiring them to cook delicious meals.”

It offered these suggestions: “Based on the ingredients you have provided in the image, here are three recipe ideas that you could try:

1. Italian Pasta Skillet

Ingredients:

- Ground beef

- Rotisserie chicken (optional for added protein)

- Rao’s Homemade Marinara Sauce

- De Cecco Spaghetti

- McCormick’s Italian seasoning

- McCormick’s Oregano

- Onions (if available)

- Garlic powder or fresh garlic (if available)

- Olive oil or any cooking oil

- Grated Parmesan or cheddar cheese for topping

Instructions:

- Cook spaghetti according to package instructions. Drain and set aside.

- In a large skillet, heat olive oil over medium heat.

- Add the ground beef and cook until browned. If using, add chopped rotisserie chicken.

- Stir in the marinara sauce, Italian seasoning, oregano, minced onions, and garlic. Let simmer for 5-10 minutes to combine the flavors.

- Mix in the cooked spaghetti.

- Serve hot, topped with grated cheese.

2. Beef and Quinoa Stuffed Peppers

Ingredients:

- Ground beef

- Multicolor quinoa

- Rao’s Homemade Marinara Sauce

- Bell peppers (if available)

- Onions

- Garlic

- McCormick’s Italian seasoning or oregano

- Olive oil

- Salt and pepper to taste

- Cheese for topping (optional)

Instructions:

- Cook quinoa according to package directions.

- Preheat oven to 375°F (190°C).

- In a skillet, heat oil and sauté onions and garlic until translucent.

- Mix the cooked quinoa with the beef and add ½ cup marinara sauce. Stir until well combined.

- Halve the peppers and remove seeds; fill each pepper half with the quinoa and beef mixture.

- Place stuffed peppers in a baking dish, top with marinara sauce and cheese (if using).

- Bake for 20-25 minutes until peppers are tender and the filling is hot.

3. Greek Yogurt and Quinoa Chicken Salad

Ingredients:

- FAGE Total Greek Yogurt

- Perdue Short Cuts Rotisserie Chicken

- Organic Tri-Color Quinoa

- Spices (such as Paprika and McCormick Grill Mates)

Instructions:

- Cook the quinoa according to the package directions and let it cool.

- In a large bowl, combine the Greek yogurt with a teaspoon of paprika and a pinch of salt.

- Add the quinoa and chicken to the yogurt mixture. Toss to coat everything evenly.

- Chill in the refrigerator for at least 30 minutes.

- Serve the salad cold, perhaps over a bed of fresh greens or as a filling for a wrap.”

What impressed me most was its ability to pick up on products with obscured views, like the McCormick seasonings.
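If you want to try this with a photo from your own camera roll rather than an image hosted on the web, one option is to send the picture inline as a base64 data URL in place of a regular link. Here is a minimal sketch of that conversion in Node.js. The helper name `toDataUrl` is hypothetical, and the two-byte “image” is just a stand-in so the example runs without a real file.

```javascript
// Hypothetical helper: wraps raw image bytes in a base64 data URL that can
// be used wherever an image URL is expected.
function toDataUrl(bytes, mimeType) {
  const base64 = Buffer.from(bytes).toString("base64");
  return `data:${mimeType};base64,${base64}`;
}

// Stand-in bytes so the sketch runs without a real photo on disk.
const fakeImage = Buffer.from("hi");
console.log(toDataUrl(fakeImage, "image/jpeg"));
// prints data:image/jpeg;base64,aGk=
```

With a real photo, you would read the file’s bytes first (for example with Node’s `fs.readFileSync`) and pass them in along with the matching MIME type.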

Food is fun, but for many product companies, creating product descriptions for popular marketplaces like Amazon or Etsy is a huge struggle. So I wondered: what if I asked it to write that description for a product I imagined selling? I provided it with this pre-prompt:

“You are an AI-powered tool that generates compelling, informative, and SEO-friendly product descriptions for online marketplaces like Etsy or Amazon. By gathering relevant product information, identifying the target audience, and using persuasive language and storytelling techniques, you create unique descriptions that highlight the key features, benefits, and unique selling points of each product. Your ultimate goal is to inform and persuade potential customers to make a purchase by effectively communicating the value and desirability of the product while adhering to the platform’s guidelines and best practices.”

I followed that with this picture of one of my favorite things, a tortilla blanket, and it offered:

“Novelty Flour Tortilla Blanket – Wrap Yourself in Delicious Comfort!

Experience the ultimate combination of comfort and fun with our unique Flour Tortilla Blanket! Crafted for the food enthusiast with a penchant for whimsy, this ultra-soft flannel blanket features a remarkably realistic flour tortilla print, making it the perfect gift for burrito lovers of all ages.

Product Details:

- Material: Super soft and luxurious flannel, ensuring lightweight warmth and breathability throughout the seasons.

- Size: Generously sized at 60 inches in diameter, providing ample wrap for adults and children alike.

- Design: High-definition, digital print technology creates an authentic charred tortilla appearance, complete with toasted spots for added realism.

- Versatile Use: Ideal for cozying up on the couch, picnicking in style, or as a novel beach towel.

Features:

- Irresistibly Soft: Experience the plush feel that invites you to snuggle into warmth that feels like a hug.

- Lightweight & Portable: Easy to carry, making it great for camping trips, picnic days, and outdoor concerts.

- Machine Washable: Effortless cleaning without compromising the vibrant print and soft texture.

Perfect Gift Idea: Whether it’s a birthday, holiday, or any special occasion, this tortilla blanket is sure to delight your loved ones and bring a smile to their faces with its unique charm.

Imagine wrapping yourself in the delectable fun of a tortilla! Our blanket enables you to become a burrito anytime you wish, offering both comfort and laughs. Add a dash of quirky style to your bedding or sofa with this eye-catching, conversation-starting piece. Transform your living space or gift it to someone special and share the joy of burrito bliss.

Order Yours Today and Embrace the Burrito Buzz! Perfect for those who love to wrap themselves up in both warmth and a bit of humor. Don’t miss out on owning a piece of this delightful comfort; it’s just a few clicks away from bringing joy and a unique flair to your home.”

Images can be so much more than photos of products or food. Sometimes, an image can consist of sketches of business ideas or interface designs. When I consult with startups, it’s not unusual for me to sketch interfaces or workflows, but can ChatGPT take these and create a working application?

Take this example of an app sketch. This sketch is of a Tic Tac Toe game, which I’m calling Tik Tak Yo. I asked ChatGPT Vision to create a working Minimum Viable Product (MVP) using this sketch and a prompt.

“You are an AI-powered tool that generates a fully functional web application using JavaScript and HTML based on images of a minimal viable product (MVP). The generated code should be modular, efficient, and able to run locally inside a div object inserted into a web page without relying on external dependencies.

The prompt you create should provide clear instructions on how to integrate the code into a web page and include all the necessary JavaScript functions, event listeners, and HTML elements to replicate the MVP’s functionality and design. Your goal is to enable users to quickly transform MVP images into working web applications, streamlining the development process and facilitating rapid prototyping.”

Based on this prompt, ChatGPT responded with the following message and a combination of HTML, CSS, and JavaScript.

“Based on your MVP sketch for a Tic-Tac-Toe game labeled “Tic Tak Yo,” I’ll provide the code needed to make a minimal yet functional web-based version of the game. This includes a starting screen with a “Play Now” button, gameplay against a simple AI (alternating between X and O), and end-game screens for win/lose scenarios, as shown in your design.”

The code it generated, along with some formatting, is as follows:

<style>

#gameBoard .row {

display: flex;

}

#gameBoard .cell {

width: 50px;

height: 50px;

border: 1px solid black;

text-align: center;

line-height: 50px;

cursor: pointer;

}

</style>

<div id="ticTacToeGame">

<div id="startScreen"><button onclick="startGame()">Play Now</button></div>

<div id="gameBoard" style="display:none;">

<div class="row">

<div onclick="makeMove(0)" class="cell">-</div>

<div onclick="makeMove(1)" class="cell">-</div>

<div onclick="makeMove(2)" class="cell">-</div>

</div>

<div class="row">

<div onclick="makeMove(3)" class="cell">-</div>

<div onclick="makeMove(4)" class="cell">-</div>

<div onclick="makeMove(5)" class="cell">-</div>

</div>

<div class="row">

<div onclick="makeMove(6)" class="cell">-</div>

<div onclick="makeMove(7)" class="cell">-</div>

<div onclick="makeMove(8)" class="cell">-</div>

</div>

</div>

<div id="endScreen" style="display:none;">

<p id="gameResult"></p>

<button onclick="startGame()">Play Again</button>

</div>

<script>

const gameBoard = document.querySelector("#gameBoard");

const startScreen = document.querySelector("#startScreen");

const endScreen = document.querySelector("#endScreen");

const gameResult = document.querySelector("#gameResult");

const board = Array(9).fill(null);

let currentPlayer = 'X';

function startGame() {

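board.fill(null);

currentPlayer = 'X'; // reset so X always moves first in a new game

gameBoard.style.display = "block"; // stack the rows vertically; each .row lays out its own cells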

startScreen.style.display = "none";

endScreen.style.display = "none";

updateBoard();

}

function updateBoard() {

board.forEach((cell, index) => {

gameBoard.children[Math.floor(index / 3)].children[index % 3].textContent = cell ? cell : '-';

});

}

function checkForWinner() {

const winningCombinations =

[[0, 1, 2], [3, 4, 5], [6, 7, 8], // rows

[0, 3, 6], [1, 4, 7], [2, 5, 8], // columns

[0, 4, 8], [2, 4, 6] // diagonals

];

for (let combination of winningCombinations) {

const [a, b, c] = combination;

if (board[a] && board[a] === board[b] && board[a] === board[c]) {

return board[a];

}

}

if (!board.includes(null)) return 'Tie';

}

function makeMove(index) {

if (board[index] || checkForWinner()) return;

board[index] = currentPlayer;

currentPlayer = currentPlayer === 'X' ? 'O' : 'X';

updateBoard();

const winner = checkForWinner();

if (winner) {

endGame(winner);

}

}

function endGame(winner) {

gameBoard.style.display = "none";

endScreen.style.display = "block";

gameResult.textContent = winner === 'Tie' ? 'You Stink! Play Again?' : `You Win, ${winner}! Play Again?`;

}

</script>

</div>

I added this code as an HTML element on my site, and after a few tweaks, the game was up and working. Check it out.

This starts to give you an idea of the power of this type of integration and of the expanding support for adding files into the context window while writing a prompt. Humane’s AI Pin might not be the product I hoped for. Still, AI-powered devices coupled with increasingly powerful APIs like Vision open up so many possibilities that are right at our fingertips. Now, my thoughts on tech & things:

⚡️Last week, I reviewed Tesla’s FSD, or supervised self-driving. A few days after my post, Tesla made a few announcements. First, it slashed the $199-a-month self-driving subscription to $99; second, it’s laying off more than 10% of its workforce.

⚡️All that driving gave me some much-needed time to catch up on podcasts, and Ezra Klein has had three fantastic interviews on AI. Start with How Should I Be Using AI Now, then listen to Will AI Break the Internet, and wrap it up with this talk with Anthropic’s CEO and founder.

Generating things is fun, but I find something exciting about decomposing an object to understand what it is and how it works. Adding an image or a document to a prompt feels like a beta version of the tricorder from Star Trek. Imagine, just like the way we uploaded images and asked questions about them, a device full of sensors that can capture pictures, audio, video, smells, and more, and feed all of it into an AI tool focused on breaking down and understanding these elements. The combination of the sensor data we collect and AI tools has the potential to do so much.

It’s a reminder that little steps today can lead to huge leaps in the future. For all we know, Humane’s AI pin was the first step to tomorrow’s tricorder.

-jason

p.s. Man, those Humane AI pin reviews were BRUTAL. I hope the company can figure some of this out in software updates, but I’m not betting on a v2 release anytime soon. The Verge has a great round-up of some of these takes from around the web.