Issue #48: Copilot, your AI coworker

Howdy 👋🏾, last week, I fixated so much on the OpenAI Spring Event, Google I/O, and Apple’s upcoming WWDC event that I somehow forgot to mention Microsoft and its Build conference, which kicked off this week. Microsoft has openly embraced AI, and all indications suggest that the company is fully committed to making AI a deeper and deeper part of its platform.

There is a ton to unpack from this year’s Microsoft Build, and as always, I recommend you watch the keynote. So far at Build, Microsoft has announced a new line of Copilot+ PCs, which switch to ARM processors the company says are built to run AI; Sam Altman hinted that ChatGPT 5 is coming; and Windows 11 is getting AI built right into the operating system.

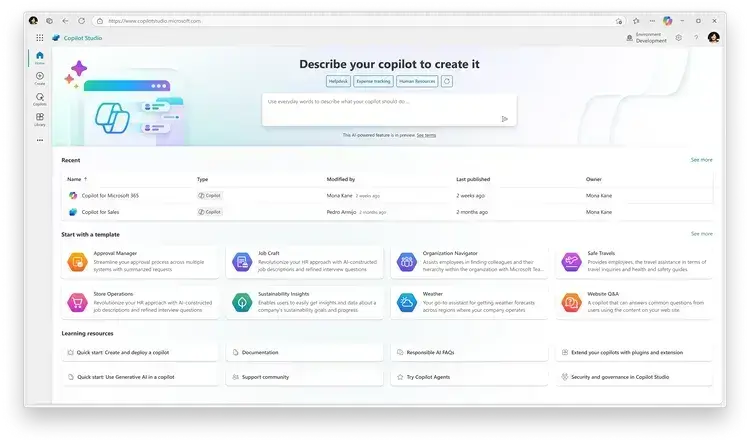

With all of this news, one thing kept pulling my gaze: the new features of Microsoft Copilot Studio. These features perfectly encapsulate where we are right now with AI and where we can expect AI to have its most immediate impact on the average worker.

Before digging into the AI part, I want to ensure everyone is familiar with RPA – Robotic Process Automation. RPA allows businesses to take human actions or processes and create a flow that can repeatedly perform the same tasks a human would. The idea is simple: automate the parts of human work we hate and replace them with automated processes. Low-code tools like UiPath have become hugely popular because of their ability to help companies easily create these automated processes.

RPAs are not just scripts. You can think of them as a screen recorder that can track how a person does a task like verifying timesheets, doing data entry, or copying the contents of an Excel spreadsheet into a web application. Once recorded, an RPA can mimic input devices like a mouse and keyboard, launch applications, and repeat the task the user demonstrated, using your data or input. Just like that, a computer can take on a previously human-intensive task – with some customization.
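To make the record-and-replay idea concrete, here is a minimal Python sketch. It is only an illustration under my own assumptions: the step format, the `replay` function, and the app and field names are all hypothetical, and it logs the actions instead of driving a real mouse or keyboard the way an actual RPA tool would.

```python
# Hypothetical "recording": each step is (action, target, value template).
# A real RPA tool would capture these from the user's screen activity.
RECORDED_STEPS = [
    ("launch", "timesheet-app", ""),
    ("click", "new-entry", ""),
    ("type", "employee-field", "{name}"),
    ("type", "hours-field", "{hours}"),
    ("click", "submit", ""),
]


def replay(steps, row):
    """Replay the recorded steps for one row of data.

    Returns a log of the simulated input actions, with the row's
    values substituted into the recorded templates.
    """
    log = []
    for action, target, template in steps:
        value = template.format(**row)
        log.append(f"{action} {target} {value}".strip())
    return log


# The same recording is repeated once per row of input data.
rows = [{"name": "Ada", "hours": 8}, {"name": "Grace", "hours": 6}]
logs = [replay(RECORDED_STEPS, row) for row in rows]

for entry in logs[0]:
    print(entry)
```

The point of the sketch is the shape of the thing: the steps are fixed at recording time, and only the data changes between runs.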

This same idea is growing quickly with AI. During Nvidia’s event, they showcased an AI company named Hippocratic AI. It plans to help hospitals navigate the nursing shortage by building AI-powered bots trained to do mundane jobs in the nursing and healthcare spaces. It’s the same idea as RPAs, but these bots are built to be conversational and feel human, and with the power of trained AI models, they can potentially move beyond basic questions or the core process to help with related or fringe issues. You can train these bots on specific tasks, but they’re not limited to a defined role or a fixed path, and you can make adjustments as needed.

At Build, Microsoft introduced new features in Copilot Studio, including one that resembles a combination of RPAs and Hippocratic AI. Using this tool, you can build an agent and easily connect that agent to your existing data in Microsoft 365 or Azure. This tool also supports a growing list of data connectors that can pull in data from SaaS platforms or ERPs like SAP or Salesforce.

What makes it powerful is that these bots are no longer chatbots that wait for a user to start a task. These agents can proactively take action, like checking an inbox every few minutes or reviewing a lead whenever it’s submitted to a Salesforce contact form. Some examples Microsoft has suggested are bots that check data to identify customers open to an upsell and then actively email those customers with a unique and customized message.
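The difference between a chatbot that waits for a prompt and an agent that reacts to events can be sketched in a few lines of Python. This is a rough illustration and not Copilot Studio’s actual API: the `Event` and `Agent` classes, the `lead_submitted` event name, and the `review_lead` handler are all names I made up for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Event:
    kind: str      # hypothetical event name, e.g. "lead_submitted"
    payload: dict  # data that arrived with the event


@dataclass
class Agent:
    # Maps an event kind to the function that handles it.
    handlers: Dict[str, Callable[[dict], str]] = field(default_factory=dict)

    def on(self, kind: str, handler: Callable[[dict], str]) -> None:
        """Register a handler so the agent acts whenever this event occurs."""
        self.handlers[kind] = handler

    def handle(self, event: Event) -> Optional[str]:
        """Run the matching handler, or return None for unknown events."""
        handler = self.handlers.get(event.kind)
        return handler(event.payload) if handler else None


def review_lead(payload: dict) -> str:
    # A real agent would call a model and send mail here; this only
    # describes the action it would take.
    return f"Drafted follow-up email to {payload['email']}"


agent = Agent()
agent.on("lead_submitted", review_lead)

# Events arrive from outside systems; no user has to start the conversation.
incoming = [
    Event("lead_submitted", {"email": "pat@example.com"}),
    Event("something_else", {}),
]

actions: List[str] = []
for event in incoming:
    result = agent.handle(event)
    if result is not None:
        actions.append(result)

print(actions)
```

Nobody typed a prompt anywhere in that loop. The agent is wired to a trigger and acts when the trigger fires, which is the shift from chatbot to agent.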

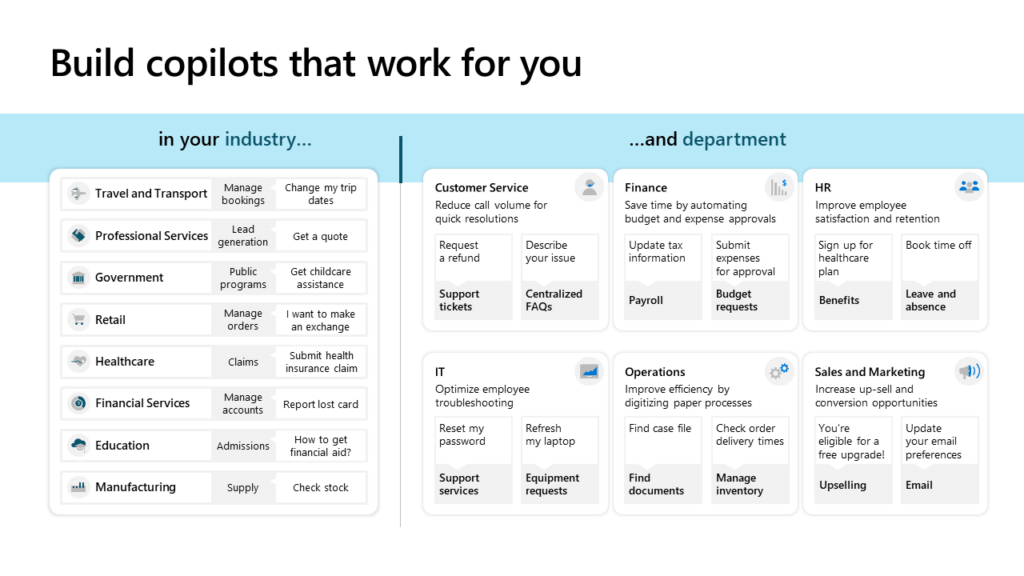

This is, without question, the next step in AI evolution – a world where we, as humans, use personalized AI assistants to manage our personal lives and increasingly work with enterprise AI agents to do our daily tasks. Need to submit an expense report, make a change to your benefits, or get an IT task like a password reset done? These tasks will move to conversational AI bots that handle them using tools like Copilot Studio and keep you focused on other aspects of your day-to-day.

This transition will be one of the most immediate and apparent for many workers. Bit by bit, large enterprises are testing this new age of AI agents for basic tasks, and as they succeed, the scope of what they do will continue to grow larger. These bots will also continue to become more conversational and ideally feel less like talking to a broken IVR system and more like Scarlett Johansson – assuming she lets us. Now, onto my thoughts on tech & things:

⚡ I believe we are on the precipice of transitioning from traditional computing devices to something different and new. The AI Pin swung and missed, and now Humane is on the auction block. Meanwhile, Meta keeps making its Ray-Ban smart glasses better and better with new AI-powered features that suggest there may be a future out there.

⚡ We’re getting closer and closer to Tesla’s promised robo-taxi announcement, but Ars Technica asks if Tesla is playing checkers while Alphabet-owned Waymo plays chess. I enjoyed using Tesla’s FSD, but it always felt human-assisted and required me to intervene occasionally. Waymo handled my multiple test rides effortlessly. Maybe the tech is better, or maybe it’s the humans monitoring the drive?

⚡ Amazon’s Alexa started the AI voice assistant craze but has been surprisingly absent from all the AI-powered assistant announcements. It’s exciting to hear that Amazon has not abandoned the platform. Looking forward to seeing what they release.

If you look at Microsoft Copilot Studio and see a replacement for IVR systems or a slightly improved version of RPA, you are missing the big picture. The goal is not an agent per task but an agent that can fill a set of tasks and roles and feel like you’re interacting with a fellow human. Take this clip from OpenAI’s Spring Event, where its multimodal abilities allow it to answer questions from a video feed, and remember that the same technology powers Microsoft’s agents. These new agents will give you a bot that says it’s Cathy from HR. It can proactively send employees emails or Slack messages about open enrollment, changes in benefits, or required actions – and it can respond to their replies. This same bot will allow you to chat and ask questions about what healthcare plan may be right for your family during onboarding. It can also pull your information from an HRIS (Human Resources Information System) or create memories of facts you’ve provided in past conversations.

The first version of these bots will feel closer to a replacement for RPA. In the very near future, though, these tools will evolve into fully functional AI agents that become your coworkers. You will interact with them to complete your job or to help check your work as these same assistants get integrated deeper and deeper into operating systems like Windows.

-jason

p.s. Speaking of AI: over two years ago, Nilay Patel of The Verge asked a simple question: What is a photo? John Gruber reminded me of the question in his post on Adobe’s new features for Lightroom. It’s a fascinating question. If you’re an iPhone or Android user, the cameras on these phones do post-processing to merge multiple shots together so that everyone is smiling, auto-correct blemishes, or adjust the lighting. Already, photos are touched-up versions of what we see. With AI, it is easier than ever to take a photo and quickly alter the background, remove people, or change the time of day. It raises the question: what is a photo, and can we rely on photos as proof?