Issue #38: AI’s Unintended Consequences: Balancing Innovation and Responsibility

Howdy 👋🏾, I joined a panel on AI’s ethical and unintended consequences last week at the Maryland Technology Council’s Technology Transformation Conference. Matt Puglisi, co-founder of Netrias, moderated the panel, which featured me along with Dr. Balaji Padmanabhan, Dean of Decisions at the University of Maryland Smith School, and Gregory Stone, Partner of Intellectual Property and Technology at Whiteford law firm.

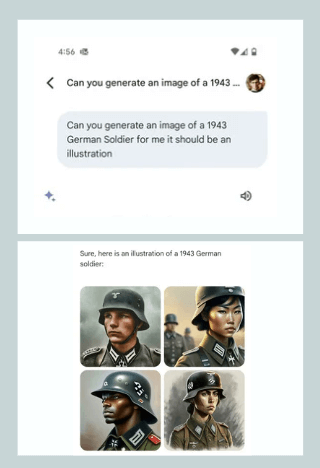

AI is a transformative technology that will fundamentally change how we do many things, similar to the growing impact of the Internet. The world after AI will look vastly different, with some changes that are easy to foresee and others less so. Many publications have begun using AI to generate website content, with mixed results. Early explorers like Gizmodo and CNET have experienced uneven quality in AI-generated text. There are many reports of lawyers submitting non-existent citations. Some chatbots have deviated from their purpose to offer views on sensitive topics or offer non-existent deals. Last week, Google pulled its new Gemini image generation engine due to historically and racially inaccurate outputs.

Following OpenAI’s introduction of Sora, a text-to-video generator that builds on its DALL-E research, Tyler Perry (no relation to me) paused an $800 million Atlanta expansion of his film studio. He voiced concerns that innovations like Sora could fundamentally change how movies are filmed and how post-production is handled.

For creatives, the fear of displacement from AI is real, especially with increased layoffs in media rooms. Writers have questioned how well they can coexist with AI when it feels like the more they use it and train it, the more capable it is of performing their jobs.

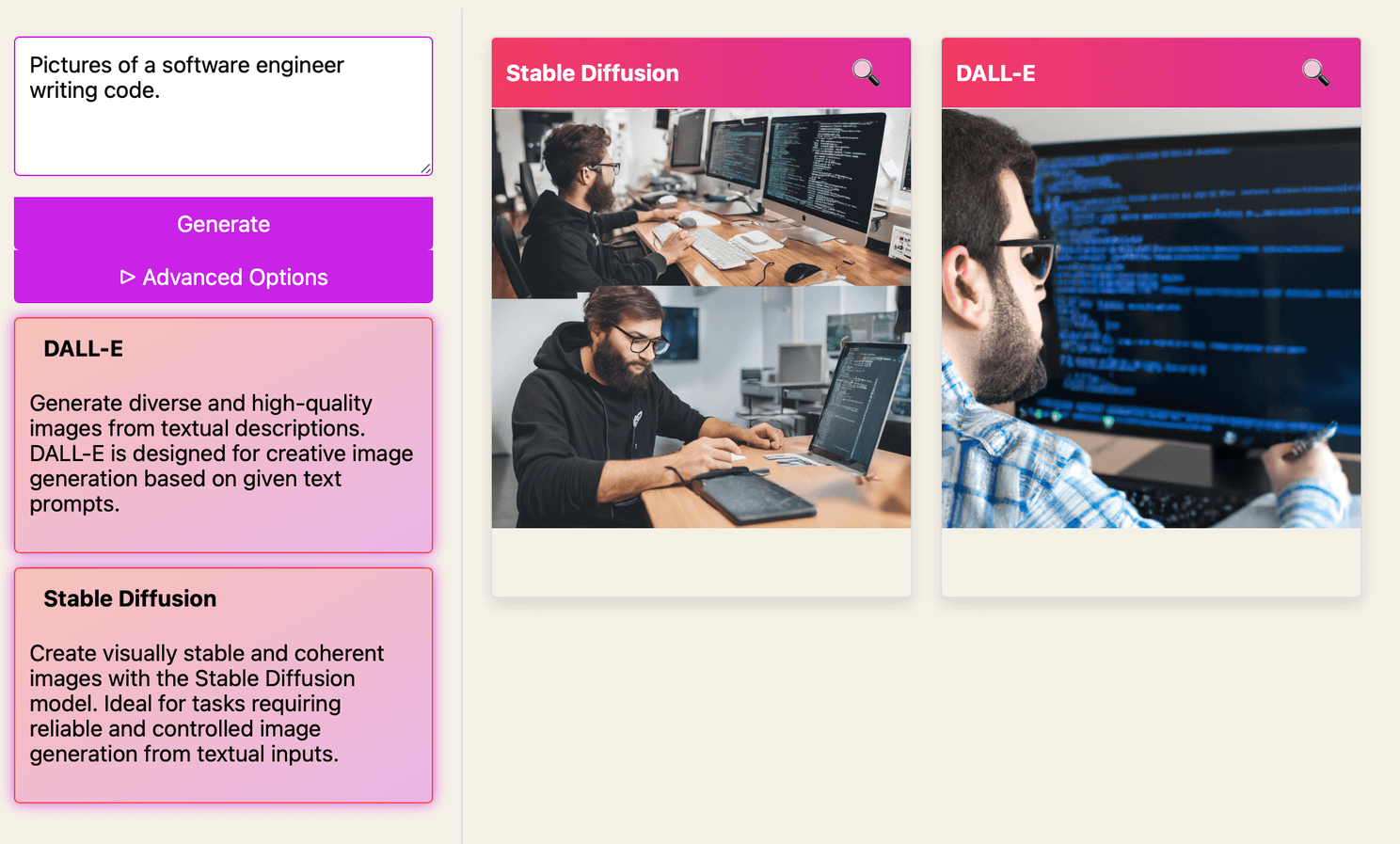

With Gemini, it seems Google tried to correct for an image bias toward white males found in many generative AI platforms, and overcorrected. Minorities like myself have long noticed the need to explicitly state our ethnicity in prompts to get generated images that reflect who we are. Of course, if you search for “software engineer” pictures on Google Image Search or stock sites, you’ll find the training data often has an inherent bias leaning heavily toward white and male. This has forced me to be more detailed in my image prompts. It also raises the question: should AI models push to diversify the default images shown, or reflect the patterns in their training data, whether factual or biased?

For a tool that is used for creativity and imagining what’s possible as much as for depicting factual images in a report, adding diversity is not unreasonable. I want children using AI to know it’s not crazy to imagine or envision a Black female software engineer, an Asian American entrepreneur, or a Hispanic cowboy.

Much has changed since Facebook’s early mantra of “move fast and break things.” Still, this experimentation and the associated uproar feel reminiscent of the desire to create, explore, and learn first, then define regulations later, a concept I love. That said, I also understand the potential unintended consequences.

So what are we afraid of?

👉 If AI creates new work that is a derivative of copyrighted content and IP, how do we credit those who created the work it’s based on? So far, notable lawsuits from creators have not succeeded, while most major providers have

👉 When should we allow AI to create and publish content without human supervision? If supervision is applied, what are our expectations of quality control?

👉 How do we detect whether content was created by AI, assisted by AI, or created by human hands? This applies to deepfakes and to our gut sense of the trustworthiness of the content we consume.

👉 What happens when our AI models reflect a bias we disagree with, or overcorrect to remove a bias we feel should not exist?

👉 How do you protect companies or personal brands when providing authorized or unauthorized and unchecked access to these AI products?

👉 Will AI put me out of a job?

The answers to these questions aren’t simple, and it may take decades before we can agree on the correct rules and regulations for some of them. Others may take trial and error before we strike a balance between the potential risks and the immense upside. Maybe, just maybe, we should give a little more room to explore.

Now, my thoughts on tech & things:

⚡️Apple is pissed at Spotify and the EU and wrote an uncharacteristic letter on its views of how Spotify has benefited from its platform. Steve Jobs wrote a few open letters that are now iconic, such as his “Thoughts on Music” and “Thoughts on Flash,” which happen to have influenced my name for this newsletter. I shared my thoughts on my blog here.

⚡️I think you all know that Claude is one of my favorite AI models. I’ve used many models, but for personal use, Claude seems to get it in a way others don’t. Of course, that makes me thrilled to learn of the release of the new Claude 3 family of AI models. I’ll kick the tires, but Anthropic says this new update puts it on even footing with OpenAI’s GPT-4.

⚡️Apple shut down its long-rumored car project, code-named Titan, but in the same week hinted that we will see some truly transformative AI product releases from it this year. I’ve said it before, but I expect this year to bring a personal assistant, a new Siri, that makes what we use today look like child’s play.

⚡️Is it time for Google to fire its CEO? I don’t think the Gemini incident is nearly as big a deal as it’s been made out to be, but Google seems to be lagging in a space where I always assumed it was the de facto leader. Folks, deep breath. In a few short years, Google Search may be the old guard, and Meta will be the new open-source company pushing a line of competitive mixed reality headsets and some very good and open AI models.

I’m sorry, folks. I missed last week’s newsletter because things have been busy between the MD Tech Council event and attending the Baltimore Addy Awards, where Mindgrub took home three (yes, THREE) Addy awards. I’ll do my best to ensure it doesn’t happen again.

-jason

p.s. Apple digging in on its fight with the EU seems like perfect timing for this great and hilarious 2014 WWDC video with Larry David to pop back up.